CDP Private Cloud is an integrated analytics and data management platform deployed in private data centers. It consists of two components: CDP Private Cloud Base and CDP Private Cloud Data Services. It provides broad data analytics and artificial intelligence capabilities, along with secure user access and data governance.

The documentation for CDP Private Cloud is organized into separate collections that cover the required and optional components. The CDP Private Cloud Base documentation describes the basic functionality of CDP Private Cloud, while individual data services such as Data Warehouse, Data Engineering, Machine Learning, and the Management Console have their own documentation. The CDP Private Cloud Data Services documentation contains general release notes, installation instructions, and migration instructions for the data services.

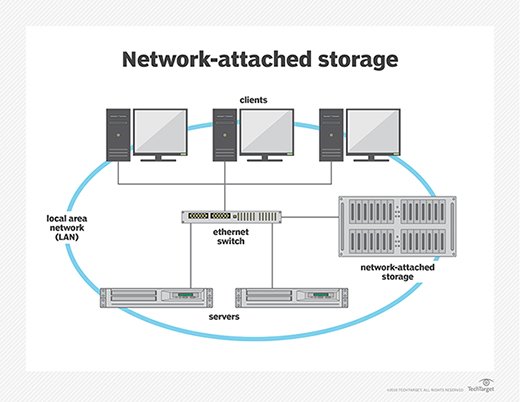

CDP Private Cloud Data Services is a CDP offering that brings many of the advantages of the public cloud to the data center.

Disaggregating compute and storage in CDP Private Cloud allows compute and storage clusters to scale independently. The Data Services provide containerized compute analytic applications that scale dynamically and can be upgraded independently. By running containers on Kubernetes, CDP Private Cloud Data Services brings both agility and predictable performance to analytic applications. It inherits consistent security, governance, and metadata management from the Private Cloud Base cluster through Cloudera Shared Data Experience (SDX).

CDP Private Cloud Data Services users can use the Management Console to quickly provision and deploy services, including Cloudera Data Warehouse, Cloudera Machine Learning, and Cloudera Data Engineering, and scale them up or down as needed.

To run the Data Services with CDP Private Cloud Data Services, you need a Private Cloud Base cluster plus a container-based cluster. For the containers, you can use either a Red Hat OpenShift cluster or an Embedded Container Service (ECS) cluster.

The Private Cloud installation process consists of installing the Management Console, registering an environment by providing details of the Data Lake configured on the Base cluster, and creating workloads.
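The prerequisites and installation order described above can be summarized in a short sketch. The names `ContainerPlatform`, `Deployment`, `missing_prerequisites`, and `DEPLOYMENT_STEPS` are hypothetical and exist only for illustration; they are not part of any Cloudera API or command-line tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class ContainerPlatform(Enum):
    """The two container cluster options named in the text."""
    OPENSHIFT = "Red Hat OpenShift"
    ECS = "Embedded Container Service"


@dataclass(frozen=True)
class Deployment:
    # Hypothetical model: a Data Services deployment needs both pieces.
    has_base_cluster: bool
    container_platform: Optional[ContainerPlatform]


def missing_prerequisites(deployment: Deployment) -> List[str]:
    """Return the prerequisites that are still missing, in a fixed order."""
    missing = []
    if not deployment.has_base_cluster:
        missing.append("CDP Private Cloud Base cluster")
    if deployment.container_platform is None:
        missing.append("container-based cluster (OpenShift or ECS)")
    return missing


# Ordered phases of the installation, as listed in the text.
DEPLOYMENT_STEPS = (
    "Install the Management Console",
    "Register an environment with the Data Lake details from the Base cluster",
    "Create workloads",
)
```

For example, `missing_prerequisites(Deployment(True, None))` reports only the container-based cluster as missing, which mirrors the requirement that both clusters be in place before the installation steps begin.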

Components of CDP Private Cloud Data Services

A CDP Private Cloud Data Services deployment includes an Environment, a Data Lake, the Management Console, and Data Services such as Data Warehouse, Machine Learning, and Data Engineering.

Management Console: A service for administering CDP. As a CDP administrator, you can use the Management Console to manage environments, data lakes, environment resources, and users across all CDP services.

Environment: A logical entity that represents the association of your Private Cloud user account with compute resources. You can use it to provision and manage workloads such as Data Warehouse and Machine Learning.

Data Lake: Surrounds your data with a protective ring of security and governance. In a CDP Private Cloud deployment, the Data Lake services are hosted on the CDP Private Cloud Base cluster and are shared by multiple workloads.

Data Warehouse: An enterprise can use this service to provision a new data warehouse and share a subset of the data with a specific team or department. A Data Warehouse cluster is created through the Management Console and accessed by end users (data analysts).

Machine Learning: Teams of data scientists can use this service to develop, test, train, and deploy machine learning models for building predictive applications, all on the data under management in the enterprise data cloud. A Machine Learning workspace is created through the Management Console and accessed by end users (data scientists).

Data Engineering: You can use this service to create, manage, and schedule Apache Spark jobs without worrying about setting up and maintaining Spark clusters. You can define virtual clusters with a range of CPU and memory resources, and a cluster scales up or down as needed to run your Spark workloads, which helps you control your costs.
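To make the idea of a virtual cluster with CPU and memory limits concrete, here is a deliberately simplified sketch. It only models resource ceilings and admission of jobs; it is not how Cloudera Data Engineering actually schedules Spark workloads, and the names `SparkJob`, `VirtualCluster`, `try_submit`, and `finish` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class SparkJob:
    name: str
    cpu_cores: int
    memory_gb: int


@dataclass
class VirtualCluster:
    """Hypothetical virtual cluster with CPU and memory ceilings."""
    max_cpu_cores: int
    max_memory_gb: int
    running: List[SparkJob] = field(default_factory=list)

    def used(self) -> Tuple[int, int]:
        """Return (CPU cores, memory in GB) consumed by running jobs."""
        cpu = sum(job.cpu_cores for job in self.running)
        mem = sum(job.memory_gb for job in self.running)
        return cpu, mem

    def try_submit(self, job: SparkJob) -> bool:
        """Admit the job only if it fits under both ceilings."""
        cpu, mem = self.used()
        fits = (cpu + job.cpu_cores <= self.max_cpu_cores
                and mem + job.memory_gb <= self.max_memory_gb)
        if fits:
            self.running.append(job)
        return fits

    def finish(self, job: SparkJob) -> None:
        """Release the resources held by a completed job."""
        self.running.remove(job)
```

With a ceiling of 8 cores and 32 GB, a job that needs 6 cores and 16 GB is admitted, while a second job that needs 4 cores is rejected until the first one finishes. Usage grows and shrinks with the workload but never exceeds the defined range.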

Replication Manager: Using the Replication Manager service on the Embedded Container Service (ECS), you can copy and migrate data (HDFS data and Hive external tables) between CDP Private Cloud Base and CDP Private Cloud Data Services (ECS) clusters.
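The relationships among the components listed above can be sketched as a small data model: an environment points at one Data Lake hosted on the Base cluster, and several workloads share that Data Lake. All class and field names here are hypothetical and serve only to illustrate the structure; they do not correspond to a Cloudera API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class WorkloadType(Enum):
    DATA_WAREHOUSE = "Data Warehouse"
    MACHINE_LEARNING = "Machine Learning"
    DATA_ENGINEERING = "Data Engineering"


@dataclass(frozen=True)
class DataLake:
    # Name of the Base cluster that hosts the Data Lake services (assumed field).
    base_cluster: str


@dataclass(frozen=True)
class Workload:
    name: str
    kind: WorkloadType


@dataclass
class Environment:
    """Associates compute resources with one shared Data Lake."""
    name: str
    data_lake: DataLake
    workloads: List[Workload] = field(default_factory=list)

    def add_workload(self, name: str, kind: WorkloadType) -> Workload:
        """Create a workload in this environment; it shares the Data Lake."""
        workload = Workload(name, kind)
        self.workloads.append(workload)
        return workload


env = Environment("analytics", DataLake(base_cluster="base-1"))
env.add_workload("sales-dw", WorkloadType.DATA_WAREHOUSE)
env.add_workload("churn-ml", WorkloadType.MACHINE_LEARNING)
```

Both workloads in the example reach the same `data_lake` through their environment, which is the sense in which the Data Lake services are shared by multiple workloads.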

CDP Private Cloud Base is the on-premises version of Cloudera Data Platform.

With additional features and enhancements across the stack, this offering combines the best of Cloudera Enterprise Data Hub and Hortonworks Data Platform Enterprise. This unified distribution provides a scalable and customizable platform on which you can securely run many types of workloads.

CDP Private Cloud Base supports a variety of hybrid solutions, including workloads created using CDP Private Cloud Data Services. Compute tasks are separated from data storage, and data can be accessed from remote clusters. This hybrid approach manages storage, table schema, authentication, authorization, and governance, laying the foundation for containerized applications.

CDP Private Cloud Base comprises Apache HDFS, Apache Hive 3, Apache HBase, Apache Impala, and many other components for specialized workloads. You can combine these services to create clusters that meet your business requirements and workloads. Common cluster configurations include the following:

Regular (Base) Clusters

- Data Engineering: Process, develop, and serve predictive models. Services included: HDFS, YARN, YARN Queue Manager, Ranger, Atlas, Hive, Hive on Tez, Spark, Oozie, Hue, and Data Analytics Studio.

- Data Mart: Browse, query, and explore your data interactively. Services included: HDFS, Ranger, Atlas, Hive, and Hue.

- Operational Database: Real-time insights for modern data-driven businesses. Services included: HDFS, Ranger, Atlas, and HBase.

- Custom Services: Choose your own combination of services.
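The cluster configurations above can be captured as a simple mapping from configuration name to included services, which makes it easy to compare them. This is an illustrative sketch only: the dictionary is transcribed from the list in this section with service names normalized, and `CLUSTER_TEMPLATES`, `templates_with`, and `common_services` are hypothetical names, not part of Cloudera Manager.

```python
from typing import Dict, List

# Services per cluster configuration, as listed in this section.
CLUSTER_TEMPLATES: Dict[str, List[str]] = {
    "Data Engineering": [
        "HDFS", "YARN", "YARN Queue Manager", "Ranger", "Atlas", "Hive",
        "Hive on Tez", "Spark", "Oozie", "Hue", "Data Analytics Studio",
    ],
    "Data Mart": ["HDFS", "Ranger", "Atlas", "Hive", "Hue"],
    "Operational Database": ["HDFS", "Ranger", "Atlas", "HBase"],
}


def templates_with(service: str) -> List[str]:
    """Return the names of the configurations that include the service."""
    return sorted(
        name for name, services in CLUSTER_TEMPLATES.items()
        if service in services
    )


def common_services() -> List[str]:
    """Return the services that every configuration includes."""
    service_sets = [set(services) for services in CLUSTER_TEMPLATES.values()]
    return sorted(set.intersection(*service_sets))
```

Calling `common_services()` on this data yields Atlas, HDFS, and Ranger, which reflects that storage, governance, and security services appear in every listed configuration.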