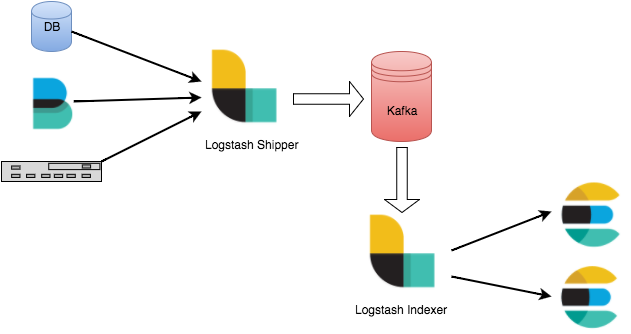

Implementing Kafka on the ELK Stack

Any production problem quickly becomes frightening when your logging infrastructure is overwhelmed and you cannot get at the data you need. If you are shipping logs to Logstash with Beats, you should put a buffer in the pipeline. A well-established way to protect an ELK Stack deployment against log overload is to place Kafka as a buffer in front of Logstash…
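To make the buffering idea concrete, here is a toy Python sketch. It does not use Kafka, Beats, or Logstash. An in-memory queue stands in for a Kafka topic and a fixed per-tick capacity stands in for Logstash. The function name `run_pipeline`, the burst sizes, and the capacity are all hypothetical values chosen for illustration, and the queue is assumed to be unbounded, which a real Kafka topic with finite retention is not.

```python
from collections import deque


def run_pipeline(bursts, capacity_per_tick, use_buffer):
    """Return (processed, dropped) event counts for a sequence of bursts.

    bursts: number of log events arriving on each tick (hypothetical load).
    capacity_per_tick: events the consumer (standing in for Logstash)
        can handle per tick.
    use_buffer: if True, excess events wait in a queue (standing in for
        a Kafka topic) instead of being lost.
    """
    backlog = deque()
    processed = dropped = 0
    for tick, arriving in enumerate(bursts):
        backlog.extend((tick, i) for i in range(arriving))
        # The consumer handles at most capacity_per_tick events per tick.
        for _ in range(min(capacity_per_tick, len(backlog))):
            backlog.popleft()
            processed += 1
        if not use_buffer:
            # No buffer: whatever the consumer could not take is lost.
            dropped += len(backlog)
            backlog.clear()
    # Once the load ends, the consumer drains anything still buffered.
    processed += len(backlog)
    return processed, dropped


bursts = [2, 10, 1, 0, 0]  # a spike on the second tick
print(run_pipeline(bursts, capacity_per_tick=3, use_buffer=False))  # (6, 7)
print(run_pipeline(bursts, capacity_per_tick=3, use_buffer=True))   # (13, 0)
```

Without the buffer, the spike costs 7 of the 13 events. With it, the consumer falls behind during the spike and catches up over the following quiet ticks, so nothing is lost.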