What exactly is a GPFS cluster?

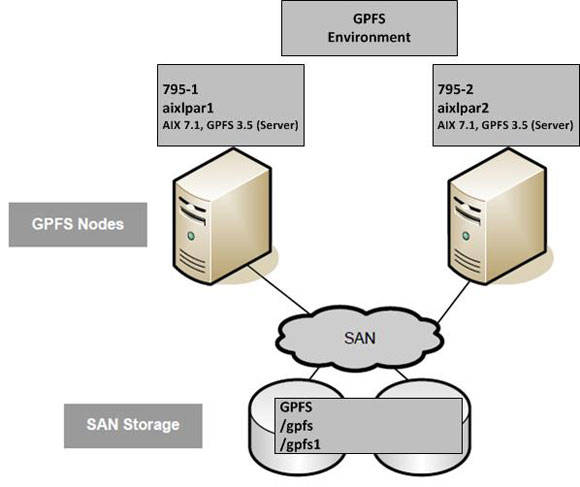

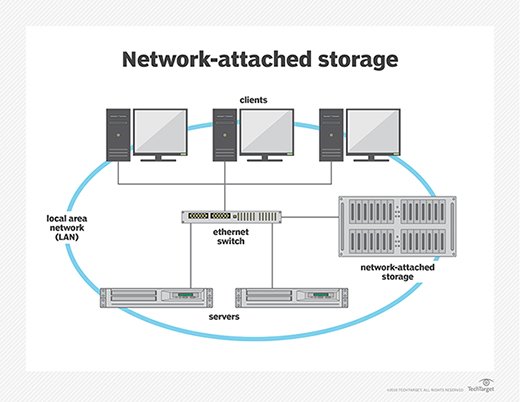

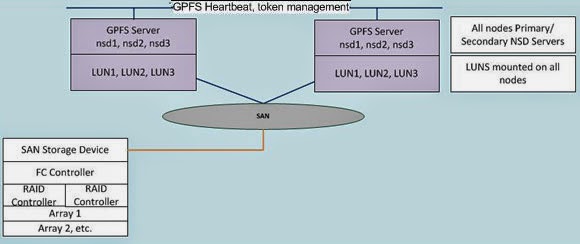

A GPFS™ cluster can be configured in numerous ways.

All supported node types, including Linux, AIX®, and Windows Server, can be used in GPFS clusters. These nodes can all be attached to a common set of SAN storage or through a mix of SAN-attached and network-attached nodes. Nodes can all be in a single cluster, or data can be shared between clusters. A cluster can be contained in a single data center or distributed across multiple data centers.

Planning the installation

Installing and configuring GPFS entails several steps that must be completed in the correct order. Before starting the setup process, go over the pre-installation and installation guidelines.

General Parallel File System (GPFS)

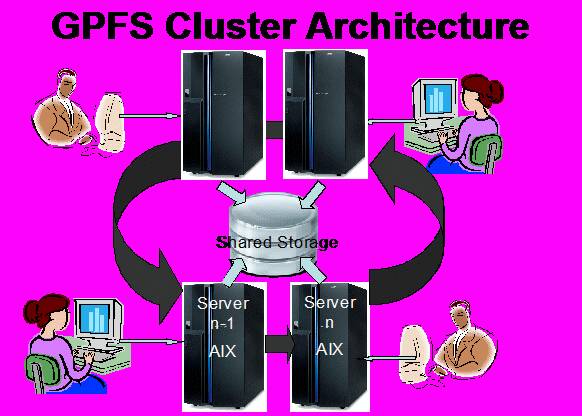

IBM’s General Parallel File System (GPFS) is a high-performance clustered file system. It can be deployed in shared-disk or shared-nothing distributed parallel modes. It is used by many of the world’s largest corporations.

- GPFS enables the configuration of a highly available file system that allows concurrent access from a cluster of nodes.

- Cluster nodes can run the AIX, Linux, or Windows operating systems.

- GPFS provides high performance by striping data blocks across multiple disks and reading and writing these blocks in parallel. It also provides block replication across multiple disks to ensure file system availability even during disk failures.
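To make the idea of striping concrete, here is a minimal sketch in Python. It assumes a simple round-robin placement of fixed-size blocks across disks; the function names `stripe_blocks` and `read_striped` are hypothetical, and GPFS's real block allocation is more sophisticated than this.

```python
def stripe_blocks(data: bytes, block_size: int, num_disks: int) -> list[list[bytes]]:
    """Split data into fixed-size blocks and place them round-robin on disks.

    Illustrative only: round-robin placement is an assumption, not a
    description of the GPFS allocator.
    """
    if block_size <= 0 or num_disks <= 0:
        raise ValueError("block_size and num_disks must be positive")
    disks: list[list[bytes]] = [[] for _ in range(num_disks)]
    for offset in range(0, len(data), block_size):
        block_index = offset // block_size
        disks[block_index % num_disks].append(data[offset:offset + block_size])
    return disks


def read_striped(disks: list[list[bytes]]) -> bytes:
    """Reassemble the original data by visiting the disks in round-robin order."""
    rows = max((len(d) for d in disks), default=0)
    blocks = []
    for row in range(rows):
        for disk in disks:
            if row < len(disk):
                blocks.append(disk[row])
    return b"".join(blocks)
```

Because consecutive blocks land on different disks, a large sequential read or write can keep all disks busy at once, which is where the parallel throughput comes from.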

Roadmap for Pre-Installation

- Plan the cluster. Review and plan your cluster configuration.

- Examine the GPFS requirements. Check that the following minimum requirements are met: system requirements and software requirements.

- Set up the network interfaces. Check that you have three interface cards.

- Create a network configuration plan.

- Examine the firewalls. Before beginning the installation, ensure that all firewalls are turned off.

- Turn off SELinux. Make sure SELinux is disabled before installing.

- Set hostnames and FQDNs. Assign the hostnames and fully qualified domain names (FQDNs) for the nodes.

- Remote access. Check that every node can be logged in to remotely.

- Configuration of storage. Connect disks to all nodes.
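As a small illustration of the hostname and FQDN step, the following Python sketch renders `/etc/hosts`-style lines for a set of nodes. The node names, IP addresses, and domain are made up for the example, and `hosts_lines` is a hypothetical helper, not part of GPFS.

```python
def hosts_lines(nodes: dict[str, str], domain: str) -> list[str]:
    """Render hosts-file lines: IP address, FQDN, then the short hostname.

    `nodes` maps a short hostname to its IP address (example data only).
    """
    lines = []
    for short_name, ip in sorted(nodes.items()):
        fqdn = f"{short_name}.{domain}"
        lines.append(f"{ip}\t{fqdn}\t{short_name}")
    return lines


if __name__ == "__main__":
    # Hypothetical two-node example.
    for line in hosts_lines({"node1": "10.0.0.1", "node2": "10.0.0.2"}, "example.com"):
        print(line)
```

Keeping the same entries on every node (or using DNS) helps ensure that each node resolves its peers consistently before the cluster is created.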

Roadmap for installation

- Install the nodes correctly. Before installing GPFS, ensure that all nodes are installed correctly.

- Install the GPFS code.

- Set up the GPFS cluster.

- Assign a license to each cluster node.

- Launch GPFS and check the status of all nodes.

- Create NSDs.

- Create file systems.
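The roadmap above maps roughly onto a sequence of GPFS administration commands. The Python sketch below only builds the command lines in order; it does not run them. The file names, cluster name, and the helper `installation_commands` are hypothetical, and the exact options should be checked against the documentation for your GPFS release. Installing the GPFS packages themselves is platform-specific and is not shown.

```python
def installation_commands(cluster_name: str, node_file: str,
                          server_nodes: list[str], stanza_file: str,
                          fs_name: str) -> list[list[str]]:
    """Return the roadmap steps as command lines, in the order they are run.

    All argument values are placeholders supplied by the caller.
    """
    return [
        # Set up the GPFS cluster from a node descriptor file.
        ["mmcrcluster", "-N", node_file, "-C", cluster_name,
         "-r", "/usr/bin/ssh", "-R", "/usr/bin/scp"],
        # Assign a license to the listed nodes.
        ["mmchlicense", "server", "--accept", "-N", ",".join(server_nodes)],
        # Launch GPFS on all nodes and check their state.
        ["mmstartup", "-a"],
        ["mmgetstate", "-a"],
        # Create NSDs from a stanza file, then a file system on them.
        ["mmcrnsd", "-F", stanza_file],
        ["mmcrfs", fs_name, "-F", stanza_file],
    ]


if __name__ == "__main__":
    for cmd in installation_commands("demo", "nodes.txt", ["node1", "node2"],
                                     "nsd.stanza", "gpfs0"):
        print(" ".join(cmd))
```

Building the commands as lists keeps each step reviewable before anything touches the cluster; the same lists could later be passed to `subprocess.run` on an admin node.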

Planning

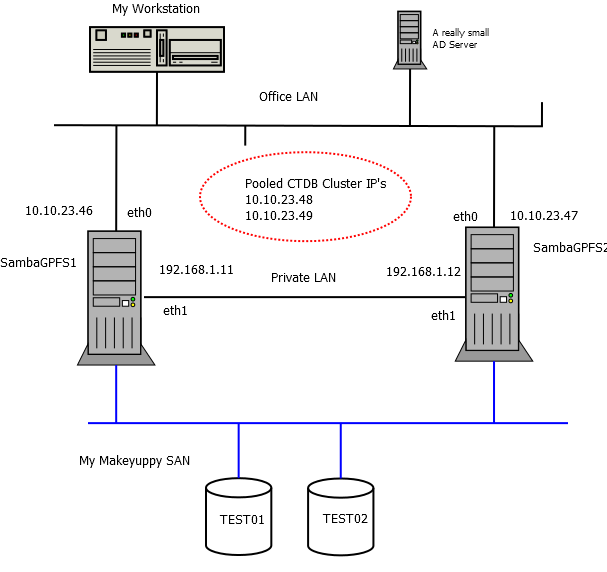

Decide on the network topology and how data will flow across it before installing GPFS and deploying the system.

Preparing the system configuration

Understand the quorum, quorum-manager, nsd-node, and compute node roles. After all the requirements are met, GPFS can be installed on all nodes.

The quorum and quorum-manager nodes are in charge of the following tasks:

- Cluster authority, management, and monitoring.

- Cluster creation.
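The number of quorum nodes matters because, under GPFS's default node quorum rule, the cluster stays active only while a majority of the designated quorum nodes are available. A minimal sketch of that arithmetic, with hypothetical function names:

```python
def node_quorum(quorum_nodes: int) -> int:
    """Minimum number of quorum nodes that must be up: one plus half, rounded down."""
    if quorum_nodes < 1:
        raise ValueError("a cluster needs at least one quorum node")
    return quorum_nodes // 2 + 1


def has_quorum(quorum_nodes: int, reachable: int) -> bool:
    """True if enough quorum nodes are reachable to keep the cluster active."""
    return reachable >= node_quorum(quorum_nodes)
```

With three quorum nodes the cluster tolerates the loss of one; a fourth quorum node raises the requirement to three without increasing the number of failures tolerated, which is why odd counts are a common choice.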

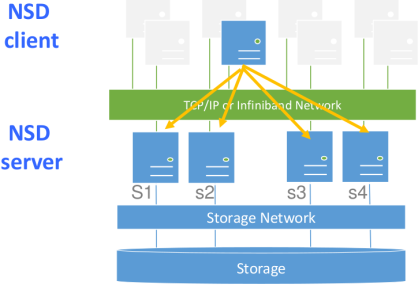

The nsd-node nodes are in charge of the following tasks:

- Creating the NSD devices.

- Creating the stanza files.

- Creating data structures on the cluster.

- Sharing file systems with other cluster nodes.

- Sharing hard disks across the cluster.
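A stanza file describes each disk that will become an NSD. The sketch below generates one `%nsd` stanza in Python; the device path, NSD name, and server names are placeholders, `nsd_stanza` is a hypothetical helper, and the default values chosen here are assumptions for illustration rather than recommendations.

```python
def nsd_stanza(device: str, nsd_name: str, servers: list[str],
               usage: str = "dataAndMetadata", failure_group: int = 1,
               pool: str = "system") -> str:
    """Render a single %nsd stanza as text for a stanza file."""
    return "\n".join([
        "%nsd:",
        f"  device={device}",
        f"  nsd={nsd_name}",
        f"  servers={','.join(servers)}",
        f"  usage={usage}",
        f"  failureGroup={failure_group}",
        f"  pool={pool}",
    ])


if __name__ == "__main__":
    # Hypothetical disk served by two NSD nodes.
    print(nsd_stanza("/dev/sdb", "nsd1", ["node1", "node2"]))
```

One stanza is written per disk, and the resulting file is what gets passed to the NSD and file system creation commands.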

The compute nodes are in charge of the following tasks:

- Using the shared disks.
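When the cluster is created, these roles are expressed in a node descriptor file, one line per node in the form `NodeName:NodeDesignations`. The Python sketch below shows one possible mapping from the role names used in this article to GPFS designations. Treating nsd-node and compute nodes as plain `client` nodes is an assumption made for the example, and `ROLE_TO_DESIGNATION` and `node_descriptors` are hypothetical names.

```python
# Assumed mapping from this article's role names to GPFS node designations.
ROLE_TO_DESIGNATION = {
    "quorum": "quorum",
    "quorum-manager": "quorum-manager",
    "nsd-node": "client",   # assumption: NSD servers carry no special designation
    "compute": "client",    # assumption: compute nodes are ordinary clients
}


def node_descriptors(roles: dict[str, str]) -> list[str]:
    """Build node descriptor lines from a {hostname: role} mapping."""
    lines = []
    for host, role in roles.items():
        if role not in ROLE_TO_DESIGNATION:
            raise ValueError(f"unknown role for {host}: {role}")
        lines.append(f"{host}:{ROLE_TO_DESIGNATION[role]}")
    return lines
```

For example, `node_descriptors({"node1": "quorum-manager", "node4": "compute"})` yields the lines `node1:quorum-manager` and `node4:client`.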

Carrying out an Installation

- Creating a Cluster

- Creating an NSD

- Creating a File System

- Mounting a Storage Device

- A server architecture to install on

- A storage architecture to create a file system on

- A network architecture to support client access to file system data

- An authentication mechanism to handle access and authorization to data

- An OS to run the Spectrum Scale software on

- Spectrum Scale software

- Spectrum Scale server licenses

- Spectrum Scale cluster quorum devices

- Spectrum Scale client licenses (optional)

- Define the installation procedure.

- The server installation is finished.

- The network installation is finished.

- Storage hardware has been installed and is available to the servers.

- The operating system has been registered and configured.

- The GPFS software is available.

- GPFS licenses have been purchased and registered.

Sharing data across multiple GPFS clusters

GPFS allows data to be shared across multiple GPFS clusters. Once a file system is mounted in another GPFS cluster, all access to the data is the same as if you were in the host cluster. Multiple clusters can be linked within the same data center or over long distances via a WAN. Each cluster in a multicluster setup can be placed in a different administrative group, which simplifies management or provides a unified view of data across multiple organizations.
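A remote mount of this kind is usually set up with a handful of commands on each side. The Python sketch below lists them in order without executing anything. Cluster names, key file paths, device names, and the helper functions are all placeholders, the key files are assumed to be exchanged out of band, and the options should be verified against the documentation for your release.

```python
def owning_cluster_commands(accessing_cluster: str, accessing_key: str,
                            device: str) -> list[list[str]]:
    """Commands for the cluster that owns the file system (sketch only)."""
    return [
        ["mmauth", "genkey", "new"],
        ["mmauth", "update", ".", "-l", "AUTHONLY"],
        ["mmauth", "add", accessing_cluster, "-k", accessing_key],
        ["mmauth", "grant", accessing_cluster, "-f", device],
    ]


def accessing_cluster_commands(owning_cluster: str, owning_key: str,
                               contact_nodes: list[str], remote_device: str,
                               local_device: str, mount_point: str) -> list[list[str]]:
    """Commands for the cluster that mounts the remote file system (sketch only)."""
    return [
        ["mmauth", "genkey", "new"],
        ["mmremotecluster", "add", owning_cluster,
         "-n", ",".join(contact_nodes), "-k", owning_key],
        ["mmremotefs", "add", local_device, "-f", remote_device,
         "-C", owning_cluster, "-T", mount_point],
        ["mmmount", local_device],
    ]
```

Splitting the steps by cluster mirrors how the work is divided in practice: the owning cluster authorizes access, and the accessing cluster registers the remote cluster and file system before mounting it.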

FAQs

What is a GPFS cluster, and why would I need one?

A GPFS cluster is a group of servers running GPFS, a high-performance, shared-disk file system used in high-performance computing environments. It enables multiple servers to access the same storage simultaneously, facilitating efficient data management and processing. You might need a GPFS cluster if you require scalable storage solutions for demanding workloads, such as those found in scientific research, big data analytics, or AI/machine learning applications.

What are the prerequisites for setting up a GPFS cluster?

Before creating a GPFS cluster, ensure you have compatible hardware, including servers with sufficient memory, storage, and network connectivity. You’ll also need to install the required software components, such as GPFS software packages and any necessary dependencies. Additionally, plan your network infrastructure carefully to ensure low-latency, high-bandwidth communication between cluster nodes.

How do I design a GPFS cluster architecture?

Designing a GPFS cluster architecture involves determining the number of nodes (servers) in the cluster, the storage layout, network topology, and failover mechanisms. Factors such as workload requirements, performance goals, and budget constraints influence the architecture design. Consider consulting with experts or referring to best practices documentation for guidance on designing an optimal GPFS cluster architecture.

What steps are involved in creating a GPFS cluster?

Creating a GPFS cluster typically involves several steps, including installing GPFS software on each cluster node, configuring network settings, setting up shared storage, creating file systems, and configuring cluster services such as GPFS node and cluster managers. Detailed documentation provided by the GPFS vendor or community resources can guide you through each step of the process.

How do I ensure high availability and data reliability in a GPFS cluster?

High availability and data reliability in a GPFS cluster can be achieved through various means, such as redundancy, data replication, and failover mechanisms. Implementing RAID (Redundant Array of Independent Disks) configurations for storage devices, configuring GPFS mirroring for data redundancy, and setting up clustering software for automatic failover are common strategies to enhance availability and reliability.

What performance tuning options are available for optimizing a GPFS cluster?

Performance tuning of a GPFS cluster involves adjusting various parameters to maximize throughput, minimize latency, and optimize resource utilization. Some performance tuning options include adjusting GPFS file system parameters, optimizing network settings for high-speed communication, and utilizing parallel I/O techniques. Experimentation and performance testing are essential to identify the most effective tuning options for your specific workload.

How do I monitor and manage a GPFS cluster after deployment?

Monitoring and managing a GPFS cluster require ongoing attention to ensure optimal performance, availability, and data integrity. Utilize monitoring tools provided by the GPFS software vendor or third-party solutions to track cluster health, resource usage, and performance metrics. Implement proactive maintenance practices, such as regular software updates, hardware inspections, and capacity planning, to prevent issues and optimize cluster operation over time.